AI is already in your business. The real question is whether your governance has caught up.

Most CISOs feel the same tension right now. Boards want assurance, business teams want speed, and regulators are moving from curiosity to scrutiny. At the same time, AI is spreading through vendors, copilots, workflows, and product features faster than many organizations can inventory it.

The National Institute of Standards and Technology (NIST) released the AI Risk Management Framework (AI RMF) 1.0 in January 2023 as a voluntary, rights-preserving, non-sector-specific framework for managing AI risk. It gives CISOs a credible structure for thinking about governance. What it does not provide is a ready-made operating model.

That gap is where most programs stall. As a CISO, you do not need another abstract framework summary. You need a practical way to map AI governance work to the framework, assign ownership, produce evidence, and keep AI adoption within your organization’s risk appetite.

“That is what effective AI governance should accomplish. It should help the business move faster while staying inside clear guardrails.” — Reet Kaur

The framework organizes AI oversight into four functions: Govern, Map, Measure, and Manage. These functions help CISOs translate AI risk from technical concerns into governance decisions that boards and executives can oversee.

In this article, we will explain these functions in plain language, show how they map to real governance work, and outline the core artifacts that make oversight credible.

Real World Situation

Last month, a board member asked a newly hired CISO at a SaaS company a simple question: “Where is AI already in our environment?” The CISO had a partial answer for internal tools, no answer for embedded vendor features, and no agreed owner for approvals. Nothing was on fire, but the governance gap was obvious.

That is where this work starts.

If you want help turning this into a working program, not just a slide deck, start with Sekaurity’s AI governance and compliance support.

Why AI Risk Feels Different to a CISO

The hardest part of AI governance is not that the risks are mysterious. It is that the risks are distributed across many parts of the organization.

AI risk does not live in just one place. It can appear in data pipelines, model supply chains, third-party models, developer tools, employee workflows, vendor platforms, and customer-facing products. Many organizations now depend on pretrained models, external APIs, and embedded AI features they did not build themselves.

NIST highlights this in the AI RMF. AI risks differ from traditional software risks because of changing data, opaque models, pretrained components, emergent behaviors, privacy exposure, and hard-to-predict failure modes.

That matters for CISOs because the control surface is broader than infrastructure and identity.

In practice, AI risk shows up in a few familiar ways:

- Employees paste sensitive content into external AI tools

- Product teams enable AI features before governance gates exist

- Procurement buys AI-enabled vendors without reviewing data handling practices

- Security teams measure model performance, but not trustworthiness, privacy, or fairness

- Boards and customers ask for evidence the organization cannot produce quickly

This is why AI governance should not be treated as a policy-only exercise. It is a cross-functional operating system for decisions, escalation, review, and evidence.

The market pressure is not slowing down. The World Economic Forum’s Global Cybersecurity Outlook 2025 reports that 66% of organizations expect AI to have the most significant impact on cybersecurity in the coming year, but only 37% have processes to assess the security of AI tools before deployment. That is the exact gap a CISO is being asked to close.

How the Four Functions Translate Into Real Governance Work

Those four functions sound simple on paper. In practice, many organizations struggle to translate them into day-to-day governance decisions.

For a CISO, the simpler translation looks like this:

| AI RMF Function | Plain-English Meaning | CISO Question |
|---|---|---|
| Govern | Set the rules, ownership, and review model | Who decides, who approves, and what evidence do we keep? |
| Map | Understand the AI use case and context | What is this system for, where is it used, and what could go wrong? |
| Measure | Test and monitor trustworthiness and risk | How do we know it is secure, reliable, private, and within tolerance? |
| Manage | Prioritize treatment and take action | What do we fix first, what do we accept, and when do we stop or deactivate? |

NIST also emphasizes that governance is a cross-cutting function infused throughout the other three. That point gets missed.

Many teams jump into tooling, testing, or use-case reviews before agreeing on decision rights. Then every later step becomes slower, more political, and harder to defend.

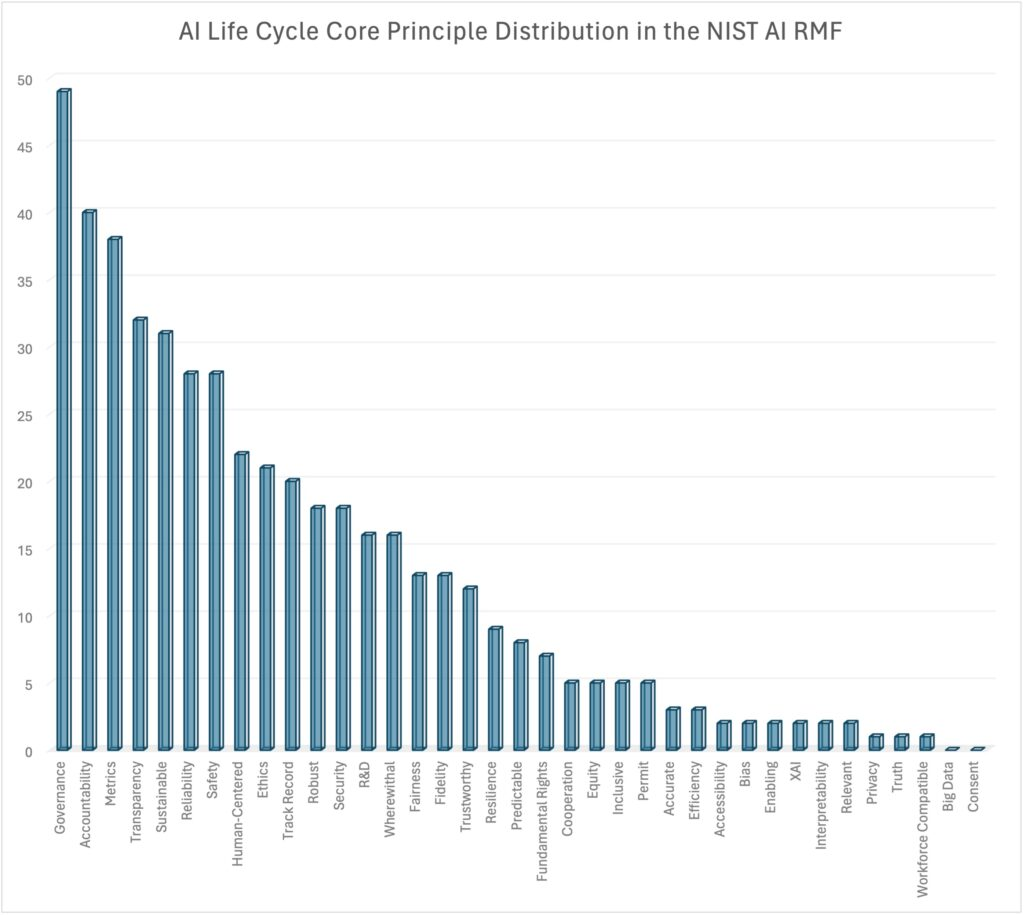

Research from Stanford Law’s CodeX program reinforces this idea. Their analysis of the framework observes that governance correlates strongly with more than half of the RMF tasks across the AI RMF lifecycle. The point is simple: governance is foundational, not administrative overhead.

Source: Stanford Law

So if you are leading NIST AI RMF implementation, do not start with a giant control spreadsheet. Start with authority, accountability, and evidence.

NIST AI RMF Implementation Starts With Governance

The first step in implementing the NIST AI RMF is to establish a governance model the business can actually operate.

The GOVERN function covers legal and regulatory understanding, trustworthy AI characteristics in policy, risk tolerance, transparency, monitoring, inventory, accountability, leadership responsibility, external engagement, and third-party risk.

That list can feel overwhelming. In practice, most CISOs can make it manageable by focusing on five foundational decisions.

1. Define AI Risk Appetite

Your organization already makes tradeoffs between speed, cost, exposure, and oversight. AI just makes those tradeoffs more visible.

An AI risk appetite statement should answer questions like:

- Which AI use cases need pre-approval?

- Which data types cannot be used in external AI tools?

- What level of human oversight is required for material decisions?

- Which vendor AI features trigger legal, privacy, or security review?

- What evidence must exist before production deployment?

This is where your executive and board advisory process becomes critical. If the board cannot understand the risk boundaries, it cannot govern them. Good board oversight of AI starts here.
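One way to keep an appetite statement from staying abstract is to write a few of its rules down as checks. The sketch below is illustrative only: the data classifications, field names, and flag wording are assumptions made for this example, not anything the NIST AI RMF prescribes.

```python
from dataclasses import dataclass

# Hypothetical data classifications that may never be sent to external AI tools.
PROHIBITED_EXTERNAL_DATA = {"customer_pii", "source_code", "credentials"}


@dataclass(frozen=True)
class UseCaseRequest:
    name: str
    data_types: frozenset[str]
    uses_external_tool: bool
    affects_material_decisions: bool


def appetite_check(request: UseCaseRequest) -> list[str]:
    """Return the appetite rules this request triggers (empty list means no flags)."""
    flags = []
    blocked = request.data_types & PROHIBITED_EXTERNAL_DATA
    if request.uses_external_tool and blocked:
        flags.append(f"prohibited data in external tool: {sorted(blocked)}")
    if request.affects_material_decisions:
        flags.append("pre-approval and human oversight required")
    return flags
```

A request that trips no rule returns an empty list. Anything else goes to whoever owns the corresponding review.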

2. Create an AI Governance Committee

This should be small enough to move quickly and senior enough to make decisions.

In most organizations, that means representation from:

- Security

- Legal

- Privacy or compliance

- Product or engineering

- Procurement or third-party risk

- A business owner for the relevant use case

The committee’s purpose is not to review every prompt. It should set policy, approve high-risk use cases, resolve tradeoffs, and review exceptions.

Real World Situation

Recently, in a tabletop exercise, we pulled together security, legal, product, compliance, and procurement for a 45-minute standing AI governance meeting. Very quickly, the team surfaced three unreviewed AI-enabled vendors.

They also found one internal copilot pilot using sensitive customer data and a customer questionnaire that already asked for AI oversight evidence. None of those issues required panic. All of them required ownership.

3. Assign Decision Rights

This is where many AI governance efforts fail.

You need clear answers to who can:

- Approve internal AI pilots

- Approve third-party AI-enabled vendors

- Sign off on high-risk production use cases

- Accept residual risk

- Trigger incident escalation

- Deactivate or suspend an unsafe system

If this is vague, governance becomes negotiation by email.

4. Build an AI Inventory

The framework explicitly calls for mechanisms to track AI systems and use cases in the GOVERN function.

A meaningful AI inventory should include more than internally developed models. It should capture both internal and vendor-embedded AI.

At minimum, an inventory should include:

- Use case name

- Business owner

- System or vendor name

- Data used or exposed

- Decision impact level

- Human oversight requirement

- Review status

- Third-party dependency

- Monitoring owner

This is also where AI risk assessments and reviews start to create real value. You cannot govern what you have not identified.
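As a rough illustration, the inventory fields above could be captured in a simple record type. This is a minimal sketch, not a prescribed schema: the value scales ("low", "medium", "high") and status labels are assumptions, and a spreadsheet or GRC tool would serve the same purpose.

```python
from dataclasses import dataclass


@dataclass
class AIInventoryEntry:
    use_case_name: str
    business_owner: str
    system_or_vendor: str
    data_used_or_exposed: str
    decision_impact_level: str  # assumed scale: "low", "medium", "high"
    human_oversight_requirement: str
    review_status: str  # assumed values: "not reviewed", "in review", "approved"
    third_party_dependency: str
    monitoring_owner: str


def needs_attention(inventory: list[AIInventoryEntry]) -> list[str]:
    """Names of high-impact use cases that are unapproved or lack a monitoring owner."""
    return [
        entry.use_case_name
        for entry in inventory
        if entry.decision_impact_level == "high"
        and (entry.review_status != "approved" or not entry.monitoring_owner)
    ]
```

The value is less in the code than in the query it enables: once entries share a structure, "which high-impact use cases have no owner?" becomes a one-line question.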

5. Decide What Evidence Counts

For every meaningful AI use case, define the evidence package required for approval.

This package should contain the artifacts that demonstrate the system was reviewed, approved, and monitored appropriately.

Typical evidence includes:

- Risk assessment summary

- Approval record

- Data handling notes

- Vendor review outcome

- Testing and validation record

- Monitoring plan

- Escalation or rollback plan

Together, these artifacts provide the evidence that governance decisions were made and risks were evaluated.

That is how governance becomes defensible.
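The evidence package can also work as an approval gate. The sketch below assumes the seven artifact types listed above are all mandatory, which is a simplification: in practice the required set would likely vary by risk tier. The identifiers are hypothetical.

```python
# Artifact names mirror the evidence list above; identifiers are illustrative.
REQUIRED_EVIDENCE = (
    "risk_assessment_summary",
    "approval_record",
    "data_handling_notes",
    "vendor_review_outcome",
    "testing_and_validation_record",
    "monitoring_plan",
    "escalation_or_rollback_plan",
)


def evidence_gaps(submitted: set[str]) -> list[str]:
    """Return required artifacts missing from the package, in checklist order."""
    return [item for item in REQUIRED_EVIDENCE if item not in submitted]


def ready_for_approval(submitted: set[str]) -> bool:
    """A use case is ready only when no required artifact is missing."""
    return not evidence_gaps(submitted)
```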

How to Map AI Governance Activities to the Four AI RMF Functions

Once governance exists, you can map the real work.

GOVERN: Build the Operating System

Use GOVERN when you are establishing policy, roles, transparency, review cadence, inventory, and third-party controls.

Typical CISO-owned or CISO-led governance activities include:

- Drafting the AI usage policy

- Defining review gates for internal and vendor AI use

- Setting committee cadence and escalation paths

- Defining AI risk tolerance with executive leadership

- Requiring inventory and documentation standards

- Ensuring deactivation authority exists for unsafe systems

If your current AI governance work feels scattered, this is usually the missing layer.

MAP: Establish Context Before Approval

MAP is where you define the intended purpose, users, benefits, costs, assumptions, knowledge limits, human oversight, and third-party dependencies.

In practical terms, this is your intake and review step.

Before approving a use case, ask:

- What problem is this AI system solving?

- What data does it touch?

- Who uses the output?

- Could the output materially affect customers, employees, or regulated decisions?

- What happens if it is wrong?

- Is there a viable non-AI alternative?

This is also where shadow AI gets surfaced. Teams often assume they are requesting approval for a new initiative when they are actually disclosing a use case that is already active.
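The intake questions above can feed a simple routing rule. The following is a sketch under stated assumptions: the three review tiers and the order of the checks are invented for illustration, and a real program would tune both to its own risk appetite.

```python
from dataclasses import dataclass


@dataclass
class IntakeAnswers:
    problem_statement: str
    touches_sensitive_data: bool
    affects_customers_or_regulated_decisions: bool
    already_in_use: bool  # True means this is a disclosure, not a new request
    non_ai_alternative_exists: bool  # recorded for reviewers; not used in routing


def review_tier(answers: IntakeAnswers) -> str:
    """Route an intake form to a review tier using an assumed, deliberately simple rule."""
    if answers.affects_customers_or_regulated_decisions:
        return "committee review"
    if answers.touches_sensitive_data or answers.already_in_use:
        return "security and privacy review"
    return "standard review"
```

Routing already-active use cases to a closer review is a design choice here: it treats shadow AI disclosures as higher priority than fresh requests, because the exposure already exists.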

MEASURE: Move Beyond Performance Metrics

MEASURE is where many security teams default to technical testing alone. NIST is broader than that.

The framework calls for evaluating valid and reliable operation, safety, security and resilience, transparency and accountability, explainability, privacy, fairness, environmental impact, and emergent risks.

For a CISO, that means asking not only, “Does it work?” but also:

- Is it secure in the deployment context?

- Are access, logging, and monitoring in place?

- Is sensitive data exposure controlled?

- Can we explain the system well enough for its use case?

- Are we tracking incidents, overrides, and user complaints?

- Have we documented what we are not measuring?

This is where AI governance theater gets exposed. A team may have a successful pilot with strong productivity gains but no useful evidence on privacy, resilience, or human override.
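Tracking overrides is one measurement that rarely shows up in pilot reports. As a hedged example, the snippet below computes a human override rate and compares it to a tolerance. The 10% threshold and the sample counts are assumptions chosen for illustration, not a NIST benchmark.

```python
def override_rate(total_outputs: int, human_overrides: int) -> float:
    """Share of AI outputs that a human reviewer changed or rejected."""
    if total_outputs <= 0:
        raise ValueError("total_outputs must be positive")
    return human_overrides / total_outputs


# Assumed tolerance: an override rate above 10% triggers a review.
OVERRIDE_TOLERANCE = 0.10

rate = override_rate(total_outputs=400, human_overrides=52)
print(f"override rate: {rate:.1%}, within tolerance: {rate <= OVERRIDE_TOLERANCE}")
# prints "override rate: 13.0%, within tolerance: False"
```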

If your organization is exploring AI use in security operations, our guide to AI-augmented SOCs is a useful parallel. It shows the same tension between speed, visibility, and governance.

MANAGE: Prioritize, Treat, Escalate

MANAGE is the decision layer after assessment. This is where you decide:

- Whether deployment should proceed

- Which risks need mitigation first

- Which risks can be accepted temporarily

- When residual risk is too high

- What incident response and rollback path applies

- When a system should be superseded, disengaged, or deactivated

This point matters more than most teams admit. NIST explicitly includes mechanisms to supersede, disengage, or deactivate AI systems with outcomes inconsistent with intended use. If your governance process has no stop button, it is incomplete.
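To make the "stop button" concrete, the decision logic can be written so that deactivation is checked first. This is a minimal sketch: the numeric risk scores, the four outcomes, and their ordering are illustrative assumptions, not the framework's wording.

```python
from enum import Enum


class Decision(Enum):
    PROCEED = "proceed"
    MITIGATE_FIRST = "mitigate before deployment"
    ESCALATE = "escalate to the risk owner"
    DEACTIVATE = "disengage or deactivate"


def manage_decision(
    residual_risk: int,
    tolerance: int,
    outside_intended_use: bool,
    mitigation_available: bool,
) -> Decision:
    """Pick a treatment path; the stop condition is evaluated before anything else."""
    if outside_intended_use:
        return Decision.DEACTIVATE
    if residual_risk <= tolerance:
        return Decision.PROCEED
    if mitigation_available:
        return Decision.MITIGATE_FIRST
    return Decision.ESCALATE
```

Checking the stop condition first means a low risk score cannot mask a system that is operating outside its intended use.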

This is also where incident response tabletop readiness becomes useful. You want leaders to practice these decisions before a real AI incident forces them.

Need a practical next step? Sekaurity helps teams turn framework language into operating models, review workflows, and board-ready artifacts through our AI governance and compliance engagements.

The CISO Artifact Set That Makes AI Governance Real

If you want your NIST AI RMF implementation to survive executive scrutiny, customer questionnaires, and audit pressure, focus on artifacts.

Artifacts turn governance discussions into evidence that leaders, auditors, and customers can understand quickly.

Here is the minimum viable artifact set we recommend.

- AI Inventory: Without an inventory, governance becomes guesswork. This is your source of truth for AI in the business, including internal systems, vendor AI capabilities, and shadow AI. It should be easy to update and review on a defined cadence. The AI inventory should include:

  - Use case name

  - Business owner

  - System or vendor name

  - Data used or exposed

  - Decision impact level

  - Human oversight requirement

  - Review status

- AI Usage Policy: This defines acceptable and prohibited uses, approval thresholds, data handling rules, and responsibilities. A good policy does not try to sound comprehensive. It tries to be usable.

- Vendor AI Review Checklist: This is where third-party risk gets operationalized. Include questions on:

  - Data retention and training use

  - Access controls and logging

  - Model transparency

  - Subprocessors and dependencies

  - Incident notification

  - Human review expectations

- AI Risk Register and Heatmap: This translates use-case findings into executive language. It should make tradeoffs visible. Leaders should be able to scan it in under a minute.

- Board Briefing and Escalation Path: The board does not need a model card. It needs a readable oversight narrative. That usually includes:

  - Where AI is in use

  - Which use cases are highest risk

  - What controls are in place

  - What gaps remain

  - Which decisions need leadership input
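For the risk register and heatmap in the artifact set above, a common convention is to score each use case as likelihood times impact and bucket the result into color bands. The sketch below assumes 1 to 5 rating scales and arbitrary cut-offs, and the register entries are made-up examples. None of this is specified by NIST.

```python
def heat_band(likelihood: int, impact: int) -> str:
    """Map 1-5 likelihood and impact ratings to a heatmap band (assumed cut-offs)."""
    for value in (likelihood, impact):
        if not 1 <= value <= 5:
            raise ValueError("ratings must be between 1 and 5")
    score = likelihood * impact
    if score >= 15:
        return "red"
    if score >= 8:
        return "amber"
    return "green"


# Hypothetical register rows: (use case, likelihood, impact).
register = [
    ("Vendor CRM with embedded AI summaries", 3, 3),
    ("Support copilot using customer data", 4, 4),
    ("Internal meeting-notes assistant", 2, 2),
]

# Sort highest score first so leaders see the top risks at a glance.
for name, likelihood, impact in sorted(register, key=lambda r: r[1] * r[2], reverse=True):
    print(f"{heat_band(likelihood, impact):5}  {name}")
```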

Real World Situation

In one recent enterprise deal, a customer sent an AI governance questionnaire late in the sales process and needed answers the next morning.

Because the organization had already established an AI inventory, usage policy, and vendor review checklist with Sekaurity’s support, the security team was able to respond quickly with clear evidence instead of scrambling for answers.

The deal kept moving.

Security became an enabler rather than a hurdle.

Common Failure Modes in NIST AI RMF for CISOs

The most common mistakes are not technical. They are organizational.

- Treating AI Governance as Compliance Theater: If the output is a polished policy and no one can explain the approval path, the program is not ready.

- Ignoring Third-Party and Shadow AI: Many organizations focus on internally developed AI and miss the faster-growing problem: AI capabilities embedded in software they already use.

- Measuring Productivity, Not Trustworthiness: Product teams often bring strong performance metrics. Governance requires broader evidence.

- No Defined Kill Switch: If a system behaves outside intended use, leaders need an agreed path to suspend it. NIST includes this for a reason.

- No Board-Ready Narrative: Security teams may understand the risk and still fail to get funding or alignment because they cannot explain it in business terms.

This is why strong security teams move from firefighting to foresight, which we wrote about in The 7 Habits of World-Class Security Teams.

A 90-Day NIST AI RMF Implementation Roadmap

You do not need to boil the ocean. You do need momentum.

Days 1-30: Establish Control

- Form the AI governance committee

- Define provisional AI risk appetite and review thresholds

- Launch an AI inventory effort across internal and third-party use cases

- Publish interim AI usage guidance for employees

- Identify high-risk use cases already in production

Days 31-60: Build the Evidence Layer

- Standardize intake and review questions using MAP concepts

- Define minimum evidence requirements for approval

- Create vendor AI review criteria with procurement and legal

- Build an AI risk register and executive heatmap

- Document escalation and suspension authority

Days 61-90: Operationalize and Report

- Start regular committee reviews

- Add monitoring expectations for approved use cases

- Review one high-risk use case end to end through Govern, Map, Measure, and Manage

- Prepare a board-ready update with risks, gaps, and next decisions

- Align the operating model to future-state goals such as ISO/IEC 42001 readiness where relevant

This phased approach also helps with budget. Gartner forecast global security and risk management spending at $240 billion in 2026, up 12% from 2025.

In that environment, boards are more likely to fund visible risk reduction than vague governance plans.

What Good Looks Like for the CISO

At the end of a strong first phase, you should be able to answer six questions with confidence:

- Where is AI already in use across the business?

- Which use cases are highest risk, and why?

- Who approves, monitors, and accepts AI risk?

- What evidence exists for production use cases?

- How are vendor AI capabilities reviewed?

- What is the escalation and exception path when a system performs outside intended use?

If you cannot answer those questions yet, that is not failure. It is your roadmap.

NIST designed the AI RMF as a flexible framework, and the companion playbook exists to help organizations tailor suggested actions to their context.

The point is not to implement all 90-plus tasks at once. The point is to make AI risk governable.

Real World Situation

In late January, a newly appointed CISO discovered three separate AI workstreams across the company: one in product, one in legal, and one in IT. Each group assumed someone else owned the governance model.

Over the next 30 days, the organization did not try to build a perfect program. Instead, it focused on the fundamentals: a governance committee, an AI inventory, a review path, and a clear escalation model.

That alone moved the company from ambiguity to control.

Perfect maturity can wait. Ownership cannot.

Conclusion

For CISOs, the challenge is not deciding whether NIST AI RMF is useful. It is making the framework operational.

The practical path is straightforward. Start with governance, not tools. Define AI risk appetite and decision rights. Build the artifact set that makes oversight real. Then map use cases through Govern, Map, Measure, and Manage in a way leaders can understand and teams can follow.

The organizations that do this well will not be the ones with the longest policy packs. They will be the ones that can show where AI is used, what risk it creates, who owns the decisions, and how the business stays within its risk appetite.

If you need help building an AI governance operating model that is board-ready, risk-based, and aligned to NIST AI RMF, Book a Meeting. We can help you inventory AI use, map controls, define decision rights, and give leadership a narrative they can act on.